Designing Responsibility in Times of Conflict

Now that generative AI can create hyper-realistic text, images, and even entire narratives, UX design is no longer just about usability. It is about responsibility at scale.

Nowhere is this more critical than in conflict zones like the Middle East, where information is volatile, emotions are heightened, and the line between truth and manipulation is increasingly blurred.

This is where Ethical UX becomes not just a discipline but a duty.

The New Power of Generative AI

Generative AI systems can produce content that is often hard to distinguish from the real thing: news articles, satellite images, videos, and social media posts. While this unlocks creativity and efficiency, it also introduces serious risks:

- Misinformation and deepfakes

- Bias amplification

- Privacy violations

- Manipulated narratives

Research shows that generative AI raises major concerns around bias, misinformation, and data ethics, all of which directly impact user trust and societal stability.

In conflict scenarios, these risks are amplified.

When UX Meets War: The Middle East Context

Modern conflicts are no longer fought only on the ground—they are fought through interfaces.

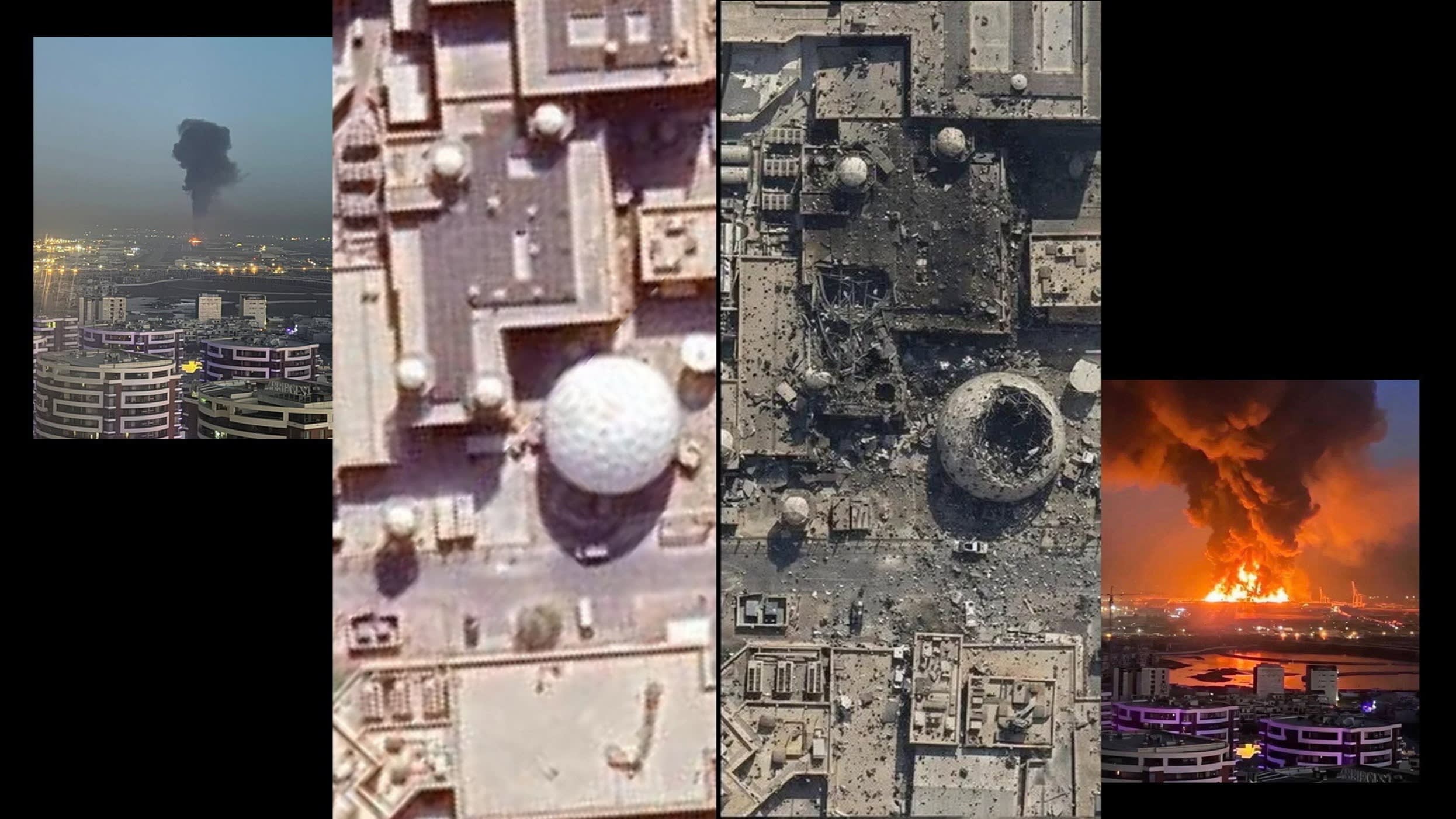

During ongoing tensions in the Middle East, AI-generated content has already been used to distort reality:

- Fake satellite imagery and manipulated visuals spread rapidly

- Social media platforms amplify emotionally charged AI-generated content

- Users struggle to distinguish truth from fabrication

This creates a dangerous UX problem:

Users are interacting with interfaces that feel trustworthy—but may not be.

As one expert noted, AI has made it significantly easier to fabricate convincing war visuals, making it harder for audiences to verify authenticity.

The Ethical UX Challenge

Traditional UX goals—clarity, efficiency, delight—are no longer enough.

Designers must now ask:

- Is this interface truthful?

- Does it protect users from harm?

- Does it enable informed decision-making?

Ethical UX in AI systems revolves around three core pillars:

1. Transparency

Users must understand:

- When AI is being used

- How outputs are generated

- What limitations exist

Lack of transparency erodes trust and leads to misuse.

2. Fairness & Bias Mitigation

AI systems often reflect historical and cultural biases embedded in data.

In geopolitical conflicts, this can:

- Skew narratives

- Reinforce propaganda

- Marginalize certain voices

UX designers must actively design for balanced representation and context.

3. User Autonomy

Interfaces should empower users—not manipulate them.

Dark patterns in AI (like auto-generated persuasive content) can:

- Influence opinions subconsciously

- Amplify fear or outrage

- Reduce critical thinking

Ethical UX ensures users remain in control of interpretation and action.

UX Failures in Generative AI Systems

Many current AI interfaces fall short:

- Confident but incorrect answers

- Lack of source attribution

- Poor feedback mechanisms

- Inaccessible or unclear outputs

These usability flaws are not just inconvenient. They are ethically dangerous, especially when users rely on AI during crises.

In war-related contexts, a misleading interface can shape public perception, policy opinions, and even real-world actions.

Designing Ethical UX for Conflict-Aware AI

So what should designers do?

1. Design for Verification

- Show sources and confidence levels

- Highlight uncertainty clearly

- Provide fact-checking pathways
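As a rough illustration of these three points, here is a minimal Python sketch of how an interface layer might attach sources, a confidence label, and a fact-checking prompt to an AI answer. Everything in it is an assumption made for illustration: the `AiAnswer` type, the 0.0 to 1.0 `confidence` score, the thresholds, and the wording of the messages are hypothetical and not taken from any particular product.

```python
from dataclasses import dataclass, field


@dataclass
class AiAnswer:
    """An AI output plus the metadata a verification-first UI would surface."""
    text: str
    confidence: float  # hypothetical 0.0-1.0 score supplied by the system
    sources: list[str] = field(default_factory=list)


def confidence_label(score: float) -> str:
    """Map a numeric score to plain-language wording (thresholds are illustrative)."""
    if score >= 0.8:
        return "High confidence"
    if score >= 0.5:
        return "Medium confidence"
    return "Low confidence"


def render_answer(answer: AiAnswer) -> str:
    """Build the text shown to the user: answer, confidence, sources, fact-check prompt."""
    lines = [answer.text, f"[{confidence_label(answer.confidence)}]"]
    if answer.sources:
        lines.append("Sources:")
        lines.extend(f"  - {source}" for source in answer.sources)
    else:
        # Make the absence of sources explicit instead of staying silent.
        lines.append("No sources available. Treat this as unverified.")
    if answer.confidence < 0.5 or not answer.sources:
        # Offer a fact-checking pathway whenever uncertainty is high.
        lines.append("Consider checking this claim with an independent fact-checker.")
    return "\n".join(lines)
```

Calling `render_answer(AiAnswer("Example claim", 0.3))` would produce a low-confidence label, an explicit "no sources" notice, and the fact-checking prompt. The design choice worth noting is that missing sources are stated outright rather than hidden.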

2. Build Friction Where It Matters

Not every UX should be frictionless.

For sensitive content:

- Add warnings for unverified media

- Slow down sharing mechanisms

- Prompt users to reflect before engaging
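One way to picture deliberate friction is as a small decision rule that runs before a share action. The sketch below is hypothetical: the `MediaItem` flags, the `ShareStep` names, and the 10-second delay are assumptions chosen for illustration, and a real platform would tune them through research and testing.

```python
from dataclasses import dataclass
from enum import Enum


class ShareStep(Enum):
    ALLOW = "allow"                    # share immediately
    CONFIRM = "confirm"                # show a "reflect before sharing" prompt
    WARN_AND_DELAY = "warn_and_delay"  # show a warning and enforce a short wait


@dataclass
class MediaItem:
    verified: bool   # hypothetical flag: has the media been verified?
    sensitive: bool  # hypothetical flag: conflict-related or graphic content


def share_friction(item: MediaItem, opened_by_user: bool) -> tuple[ShareStep, int]:
    """Return the friction step and a delay in seconds before sharing is enabled."""
    if item.sensitive and not item.verified:
        # Highest risk: unverified media about a sensitive topic.
        return ShareStep.WARN_AND_DELAY, 10
    if item.sensitive or not opened_by_user:
        # Moderate risk: ask the user to pause, with no enforced wait.
        return ShareStep.CONFIRM, 0
    return ShareStep.ALLOW, 0
```

The point of the sketch is that friction scales with risk: ordinary content the user has actually opened is shared without interruption, while unverified sensitive media gets both a warning and a pause.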

3. Contextualize Information

- Add timelines, geography, and background

- Avoid isolated, decontextualized AI outputs

4. Make AI Visible

“Invisible AI” can be dangerous in high-stakes scenarios.

Users should always know:

- This content is AI-generated

- This may not reflect reality
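A simple way to make this concrete is a disclosure label derived from where a piece of content came from. The following sketch assumes the system already knows (or admits it does not know) the origin of each item; the `Origin` categories and the label wording are hypothetical examples, not an established standard.

```python
from enum import Enum


class Origin(Enum):
    HUMAN = "human"
    AI_GENERATED = "ai_generated"
    AI_EDITED = "ai_edited"
    UNKNOWN = "unknown"


def disclosure_label(origin: Origin) -> str:
    """Return the label shown next to the content; empty string means no label."""
    if origin is Origin.AI_GENERATED:
        return "AI-generated. This may not reflect reality."
    if origin is Origin.AI_EDITED:
        return "Edited with AI. Details may have been altered."
    if origin is Origin.UNKNOWN:
        # Unknown origin is disclosed too, rather than treated as trustworthy.
        return "Origin unknown. This may not reflect reality."
    return ""
```

Note that content of unknown origin still gets a label. In a high-stakes setting, staying silent about provenance can read as an implicit claim of authenticity.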

5. Design for Emotional Awareness

Conflict content is emotionally charged.

UX should:

- Avoid sensationalism

- Reduce anxiety loops (doomscrolling)

- Promote balanced exposure

The Role of Designers: From Creators to Guardians

UX designers are no longer just shaping products—they are shaping perception, truth, and trust.

In the age of generative AI:

- Every interface is a media channel

- Every interaction is a potential influence point

And in conflict zones:

- Every design decision can have real-world consequences

Conclusion: Ethics Is the New UX

As generative AI becomes deeply embedded in how we consume information, especially during global conflicts, the role of UX must evolve.

Ethical UX is not a feature—it’s a foundation.

Because in a world where AI can generate anything,

design is what determines what users believe.